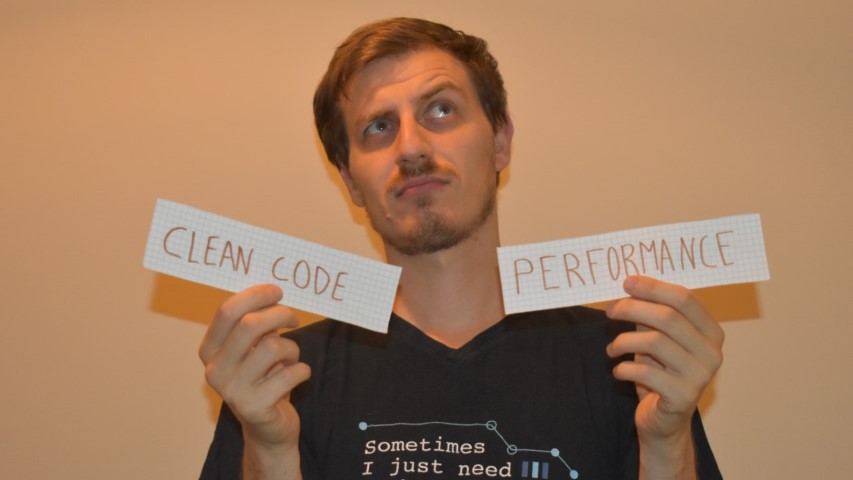

Code opinion: performance or clean code?

In any application, writing code that is clean and performant is crucial. But we often can’t have both. What to choose?

Table of Contents

Just a second! 🫷

If you are here, it means that you are a software developer. So, you know that storage, networking, and domain management have a cost .

If you want to support this blog, please ensure that you have disabled the adblocker for this site. I configured Google AdSense to show as few ADS as possible - I don't want to bother you with lots of ads, but I still need to add some to pay for the resources for my site.

Thank you for your understanding.

- Davide

A few weeks ago I had a nice discussion on Twitter with Visakh Vijayan about the importance of clean code when compared to performance.

The idea that triggered that discussion comes from a Tweet by Daniel Moka

Wrap long conditions!

A condition statement with multiple booleans makes your code harder to read.

The longer a piece of code is, the more difficult it is to understand.

It’s better to extract the condition into a well-named function that reveals the intent.

with an example that showed how much easier is to understand an if statement when the condition evaluation is moved to a different, well-named function, rather than keeping the same condition directly in the if statement.

So, for example:

if(hasValidAge(user)){...}

bool hasValidAge(User user)

{

return user.Age>= 18 && user.Age < 100;

}

is much easier to read than

if(user.Age>= 18 && user.Age < 100){...}

I totally agree with him. But then, I noticed Visakh’s point of view:

If this thing runs in a loop, it just got a whole lot more function calls which is basically an added operation of stack push-pop.

He’s actually right! Clearly, the way we write our code affects our application’s performance.

So, what should be a developer’s focus? Performance or Clean code?

In my opinion, clean code. But let’s see the different points of view.

In favor of performance

Obviously, an application of whichever type must be performant. Would you use prefer a slower or a faster application?

So, we should optimize performance to the limit because:

- every nanosecond is important

- memory is a finite resource

- final users are the most important users of our application

This means that every useless stack allocation, variable, loop iteration, should be avoided. We should bring our applications to the limits.

Another Visakh’s good point from that thread was that

You don’t keep reading something every day … The code gets executed every day though. I would prefer performance over readability any day. Obviously with decent readability tho.

And, again, that is true: we often write our code, test it, and never touch it again; but the application generated by our code is used every day by end-users, so our choices impact their day-by-day experience with the application.

Visakh’s points are true. But yet I don’t agree with him. Let’s see why.

In favor of clean code

First of all, let’s break a myth: end user is not the final user of our code: the dev team is. A user can totally ignore how the dev team implemented their application. C#, JavaScript, Python? TDD, BDD, AOD? They will never know (unless the source code is online). So, end users are not affected by our code: they are affected by the result of the compilation of our code.

This means that we should not write good code for them, but for ourselves.

But, to retain users in the long run, we should focus on another aspect: maintainability.

Given this IEEE definition of maintainability,

a program is maintainable if it meets the following two conditions:

• There is a high probability of determining the cause of a problem in a timely manner the first time it occurs,

• There is a high probability of being able to modify the program without causing an error in some other part of the program.

so, simplifying the definition, we should be able to:

- easily identify and fix bugs

- easily add new features

In particular, splitting the code into different methods helps you identify bugs because:

- the code is easier to read, as if it was a novel;

- in C#, we can easily identify which method threw an Exception, by looking at the stack trace details.

To demonstrate the first point, let’s read again the two snippets at the beginning of this article.

When skimming the code, you may incur in this code:

if(hasValidAge(user)){...}

or in this one:

if(user.Age>= 18 && user.Age < 100){...}

The former gives you clearly the idea of what’s going on. If you are interested in the details, you can simply jump to the definition of hasValidAge.

The latter forces you to understand the meaning of that condition, even if it’s not important to you - without reading it first, how would you know if it is important to you?

And what if user was null and an exception is thrown? With the first way, the stack trace info will hint you to look at the hasValidAge method. With the second way, you have to debug the whole application to get to those breaking instructions.

So, clean code helps you fixing bugs and then providing a more reliable application to your users.

But they will lose some ns because of stack allocation. Do they?

Benchmarking inline instructions vs nested methods

The best thing to do when in doubt about performance is… to run a benchmark.

As usual, I’ve created a benchmark with BenchmarkDotNet. I’ve already explained how to get started with it in this article, and I’ve used it to benchmark loops performances in C# in this other article.

So, let’s see the two benchmarked methods.

Note: those operations actually do not make any sense. They are there only to see how the stack allocation affects performance.

The first method under test is the one with all the operations on a single level, without nested methods:

[Benchmark]

[ArgumentsSource(nameof(Arrays))]

public void WithSingleLevel(int[] array)

{

PerformOperationsWithSingleLevel(array);

}

private void PerformOperationsWithSingleLevel(int[] array)

{

int[] filteredNumbers = array.Where(n => n % 12 != 0).ToArray();

foreach (var number in filteredNumbers)

{

string status = "";

var isOnDb = number % 3 == 0;

if (isOnDb)

{

status = "onDB";

}

else

{

var isOnCache = (number + 1) % 7 == 0;

if (isOnCache)

{

status = "onCache";

}

else

{

status = "toBeCreated";

}

}

}

}

No additional calls, no stack allocations.

The other method under test does the same thing, but exaggerating the method calls:

[Benchmark]

[ArgumentsSource(nameof(Arrays))]

public void WithNestedLevels(int[] array)

{

PerformOperationsWithMultipleLevels(array);

}

private void PerformOperationsWithMultipleLevels(int[] array)

{

int[] filteredNumbers = GetFilteredNumbers(array);

foreach (var number in filteredNumbers)

{

CalculateStatus(number);

}

}

private static void CalculateStatus(int number)

{

string status = "";

var isOnDb = IsOnDb(number);

status = isOnDb ? GetOnDBStatus() : GetNotOnDbStatus(number);

}

private static string GetNotOnDbStatus(int number)

{

var isOnCache = IsOnCache(number);

return isOnCache ? GetOnCacheStatus() : GetToBeCreatedStatus();

}

private static string GetToBeCreatedStatus() => "toBeCreated";

private static string GetOnCacheStatus() => "onCache";

private static bool IsOnCache(int number) => (number + 1) % 7 == 0;

private static string GetOnDBStatus() => "onDB";

private static bool IsOnDb(int number) => number % 3 == 0;

private static int[] GetFilteredNumbers(int[] array) => array.Where(n => n % 12 != 0).ToArray();

Almost everything is a function.

And here’s the result of that benchmark:

| Method | array | Mean | Error | StdDev | Median |

|---|---|---|---|---|---|

| WithSingleLevel | Int32[10000] | 46,384.6 ns | 773.95 ns | 1,997.82 ns | 45,605.9 ns |

| WithNestedLevels | Int32[10000] | 58,912.2 ns | 1,152.96 ns | 1,539.16 ns | 58,536.7 ns |

| WithSingleLevel | Int32[1000] | 5,184.9 ns | 100.54 ns | 89.12 ns | 5,160.7 ns |

| WithNestedLevels | Int32[1000] | 6,557.1 ns | 128.84 ns | 153.37 ns | 6,529.2 ns |

| WithSingleLevel | Int32[100] | 781.0 ns | 18.54 ns | 51.99 ns | 764.3 ns |

| WithNestedLevels | Int32[100] | 910.5 ns | 17.03 ns | 31.98 ns | 901.5 ns |

| WithSingleLevel | Int32[10] | 186.7 ns | 3.71 ns | 9.43 ns | 182.9 ns |

| WithNestedLevels | Int32[10] | 193.5 ns | 2.48 ns | 2.07 ns | 193.7 ns |

As you see, by increasing the size of the input array, the difference between using nested levels and staying on a single level increases too.

But for arrays with 10 items, the difference is 7 nanoseconds (0.000000007 seconds).

For arrays with 10000 items, the difference is 12528 nanoseconds (0.000012528 seconds).

I don’t think the end user will ever notice that every operation is performed without calling nested methods. But the developer that has to maintain the code, he surely will.

Conclusion

As always, we must find a balance between clean code and performance: you should not write an incredibly elegant piece of code that takes 3 seconds to complete an operation that, using a dirtier approach, would have taken a bunch of milliseconds.

Also, remember that the quality of the code affects the dev team, which must maintain that code. If the application uses every ns available, but it’s full of bugs, users will surely complain (and stop using it).

So, write code for your future self and for your team, not for the average user.

Of course, that is my opinion. Drop a message in the comment section, or reach me on Twitter!

Happy coding! 🐧